As an Azure developer who specialises in building integration solutions on the Azure Cloud Platform, I typically work to provide integrated connectivity and functionality between enterprise systems. This can include existing on-premises systems (including legacy systems) as well as more contemporary workloads that run in the cloud (e.g. IaaS, PaaS or SaaS).

I am also lucky enough to have had a few years’ experience as a cyber-security consultant. During this time, I learned a lot about information security and the associated cyber security programs that are put in place to protect organisations.

Integration solutions naturally contain connections to many resources, such as databases, cloud storage repositories, file systems and deployment infrastructure (for example, Continuous Integration / Continuous Deployment (CI/CD) servers). These connections are often authorised to access potentially sensitive information. This makes them of particular interest to those responsible for managing cyber security within an organisation, and they will likely need to comply with the requirements of the organisation’s overall Cyber Security Program.

Cyber Security Programs focus on risk management. That is, they aim to identify the likelihood of threats exploiting vulnerabilities to impact the confidentiality, integrity and availability of information systems, which in turn has adverse consequences for the business. Once these risks are identified, mitigating controls need to be implemented to reduce them to an acceptable level of residual risk.

Obviously, in practice this process of risk management can be vast and complex and in no way can be covered in a single blog. However, one of the recurring risk areas that I often see is related to access control. More specifically, risks associated with how systems are authenticated and authorised to access the resources and services required by the solution - a security topic that I also happen to be particularly interested in.

So, in this blog, I will outline some fundamental principles that can be followed when developing web applications in Microsoft Azure. These will help secure access to resources and services and mitigate risk by ensuring that secrets cannot easily be compromised. I’ll also provide a working example that outlines an insecure approach versus a more secure approach, which can be used as a reference for keeping your secrets secret in Azure!

Firstly, what constitutes a secret?

Secrets are typically pieces of information in the form of a key that facilitate access to protected resources, information and/or services through authentication and authorisation. Other forms of secrets are also possible (e.g. digital signing keys or symmetric encryption keys). However, for the purposes of this blog, I’ll focus on secrets related to access control.

Examples of secrets that must be kept secure to ensure that authentication and authorisation-based risks are effectively mitigated include:

- Basic authentication credentials

- Client ID and secret credentials for OAuth token acquisition

- Resource connection strings (especially the “key” part)

- Authorisation keys such as API gateway subscription keys

- Asymmetric encryption private keys (e.g. SSH private keys or X.509 certificate private keys)

My Baseline Secure Secret Principles

Below I’ve articulated six principles, derived from my own experience, that should be complied with as a minimum. These principles are relatively easy to implement and provide a solid baseline level of security that will reduce the risk of a security incident without unduly impacting development effort.

Follow these six principles and you’ll be on your way to having a happy Security Officer come audit time!

My six principles that help keep your secrets secret are:

- Don’t add secrets directly to the application settings of an Azure App Service

- Always store secrets in a secure vault (e.g. Azure Key Vault)

- Do not echo secrets to the host in clear text when using them across CI/CD stages and jobs or when passing as command line parameters

- Do not output secrets in ARM templates unless they are of type SecureString or SecureObject

- Ensure secrets never end up in source control, either via direct hard coding or via configuration files

- Enable Managed Identities where possible [1]

Practical secrets management: A working example

To demonstrate how non-compliance with one or more of the principles above can occur, I’ve created two simple solutions that both achieve the same functionality and output. That is, each solution:

- Deploys a Key Vault, Storage Account, App Service Plan, Application Insights Instance and Function App

- Creates a configuration table within the Storage Account for use by the Function App

- Gives the Function App access to the resources via the application settings

- Sets up a Client ID and Client Secret (dummy only) for use by the Function App to obtain an OAuth token from Azure Active Directory

The secure version of the solution protects the secrets in accordance with the defined principles, while the insecure version exposes secrets in various ways which I will discuss in the remaining sections below.

For reference, the full source code can be found on GitHub here: https://github.com/timnicol/azure-webapp-secrets-demo

Exposing Secrets in Application Settings

Although application settings are encrypted at rest and in transit by default within Azure, they can be seen by anyone with read or greater access to the resource as well as being exposed as environment variables within the host. This can make it difficult to ensure they are kept secret.

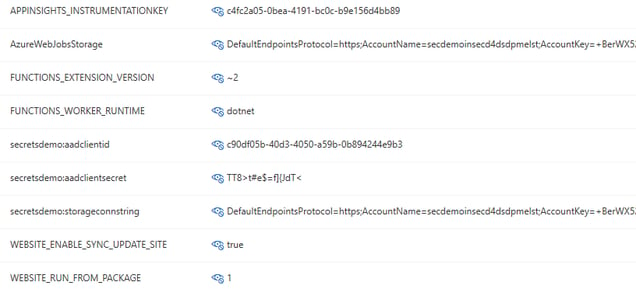

Below is a snippet of the insecure implementation – AVOID DOING THIS!

This results in the following application settings:

With these settings, any service or user that has read (or greater) access to the web application can view these secrets via multiple avenues, for example, viewing directly within the Azure portal or by running the “az webapp config appsettings list” Azure Command Line Interface (CLI) command.

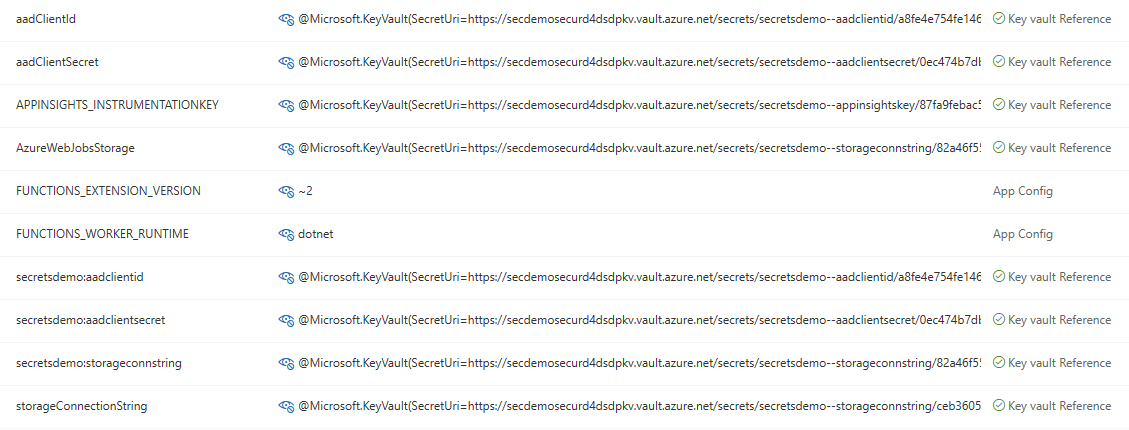

A more secure way to achieve the same functionality (also my personal preference) is to utilise Key Vault References as demonstrated in the following snippet:

As seen here on line 22, the URL reference is obtained by querying the secret ID, which results in the following application settings:

For more detailed information on Key Vault references, check out: https://docs.microsoft.com/en-us/azure/app-service/app-service-key-vault-references

By having secrets in application settings as references to Key Vault URLs, one effectively eliminates the risk of exposing these secrets by simply viewing application configuration within the Azure Portal or via the Azure CLI.
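To make the difference between a plain-text setting and a Key Vault reference concrete, here is a minimal Python sketch. It assumes the `@Microsoft.KeyVault(SecretUri=...)` reference syntax described in the Key Vault references documentation linked above. The helper names, the vault name `contoso-demo-kv` and the setting names are hypothetical and are not taken from the demo repository.

```python
import re
from typing import Dict, List, Set

# Matches the Key Vault reference form that wraps a full secret URI.
_KV_REFERENCE = re.compile(r"^@Microsoft\.KeyVault\(SecretUri=https://[^)\s]+\)$")


def key_vault_reference(secret_uri: str) -> str:
    """Wrap a Key Vault secret URI in the app-setting reference syntax."""
    if not secret_uri.startswith("https://"):
        raise ValueError("secret URI must be an https:// URL")
    return f"@Microsoft.KeyVault(SecretUri={secret_uri})"


def plain_text_settings(settings: Dict[str, str], sensitive_names: Set[str]) -> List[str]:
    """Return the sensitive setting names whose values are NOT Key Vault references."""
    return sorted(
        name
        for name in sensitive_names
        if name in settings and not _KV_REFERENCE.match(settings[name])
    )


# Hypothetical settings: one stored as a reference, one left in clear text.
settings = {
    "StorageConnectionString": key_vault_reference(
        "https://contoso-demo-kv.vault.azure.net/secrets/StorageConnectionString/"
    ),
    "ClientSecret": "dummy-secret-value",
}

print(plain_text_settings(settings, {"StorageConnectionString", "ClientSecret"}))
# prints ['ClientSecret']
```

A check like this could run against exported application settings as part of a review, flagging any sensitive setting that still holds a literal value rather than a reference.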

Exposing secrets in logs by writing to host command line

Accidentally exposing secrets in Azure DevOps logs during deployment is surprisingly easy, so it is important to take care when developing CI/CD pipeline scripts. Specifically, the rule I’ve outlined in this blog is to not echo secrets on the command line, or pass secrets directly as parameters, as they are likely to end up within the logs. Let’s look at the example of this within the secrets demo solution.

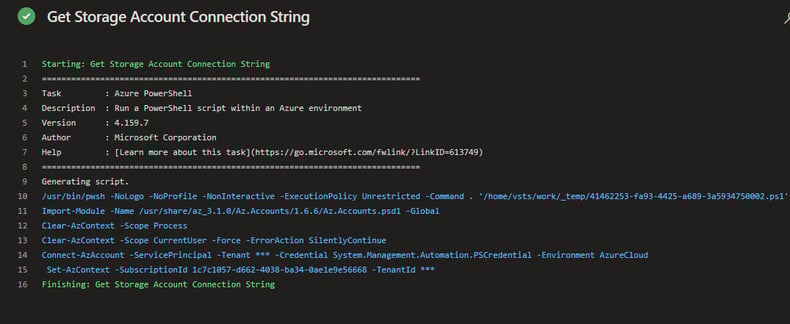

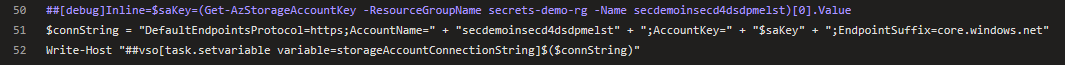

In the following snippet from the insecure YAML pipeline, the secrets (i.e. the connection string) are echoed to Azure DevOps variables that can later be used by the Azure CLI to set up the application configuration table – AVOID DOING THIS!

On the surface this looks like an effective and efficient way to get the job done. In fact, even looking at the log output initially, there doesn’t seem to be any problem.

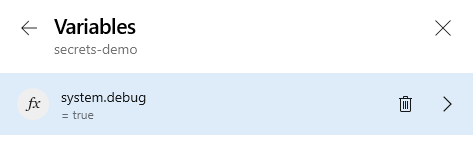

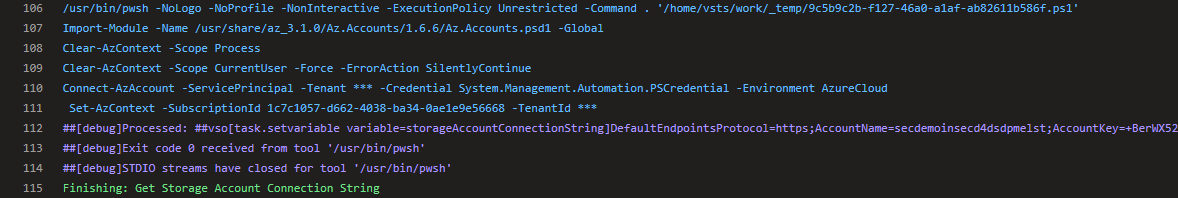

However, look what happens once System Debug is enabled (by setting the variable system.debug=true) when running the pipeline. The secrets are lit up in the log like a Christmas tree – definitely not what we want.

To mitigate this risk, take care not to echo any secrets to the command line in clear text. The best way to ensure this is to obtain the secret securely from Key Vault within each deployment task separately and use it only within that scope, which eliminates the need to echo it to a pipeline variable. If writing to an Azure Pipelines variable is required, make sure that issecret=true is set, which will mask the secret in the DevOps log. Beware, however, that the value may still be logged in clear text at the operating system level (refer to https://docs.microsoft.com/en-us/azure/devops/pipelines/process/variables?view=azure-devops&tabs=yaml%2Cbatch#secret-variables). Both of these techniques are shown in the following snippet. Lines 11 and 22 show saving the secret and then retrieving it at the point of use, while lines 12 and 23 show setting a pipeline variable with the secret and then using it directly.

This results in the following log, which does not expose the secrets:
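Separately from the demo pipeline, the issecret=true technique can be sketched in a few lines of Python for scripts that run inside an Azure Pipelines task. This is a minimal illustration rather than the demo repository's implementation. It assumes the `##vso[task.setvariable ...]` logging command format from the Azure Pipelines documentation. The function names and the variable name `storageConnectionString` are hypothetical, and the `redact` helper only imitates the `***` masking that the pipeline agent applies on its own.

```python
import re
from typing import List


def set_secret_variable_command(name: str, value: str) -> str:
    """Build the logging command that registers a masked pipeline variable."""
    if not re.fullmatch(r"[A-Za-z0-9_.]+", name):
        raise ValueError("variable name contains characters this sketch does not handle")
    if "\n" in value or "\r" in value:
        raise ValueError("this sketch does not handle multi-line values")
    return f"##vso[task.setvariable variable={name};issecret=true]{value}"


def redact(text: str, secrets: List[str]) -> str:
    """Replace each known secret with *** before the text is written to a log."""
    for secret in secrets:
        if secret:
            text = text.replace(secret, "***")
    return text


# Dummy value only; a real script would fetch the secret from Key Vault.
dummy_secret = "dummy-connection-string"

command = set_secret_variable_command("storageConnectionString", dummy_secret)
print(redact(f"Configuring table with {dummy_secret}", [dummy_secret]))
# prints Configuring table with ***
```

In a real task the command string is written to standard output once so that the agent can register the variable. Any other message that might contain the value should be redacted or, better, not written at all.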

Exposing secrets in logs via ARM template outputs

ARM template outputs are a handy way to share dependent details of Azure resources between resource deployments. For example, you may want to output the Resource ID of an App Service Plan, which a Function App deployment then uses to determine which plan to deploy to. However, it may also be tempting to output other dependent information, such as connection strings, for use by subsequent ARM templates.

This exposes secrets both in logs and also in the deployment history in the Azure portal.
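One way to catch this before deployment is to check a template's outputs section automatically. The following Python sketch flags outputs that look like secrets but are not declared with a secure type. It is an illustration under assumptions: the marker list is a small, hand-picked heuristic, and the output names and resource names in the sample template are hypothetical.

```python
import json
from typing import List

SECURE_TYPES = {"securestring", "secureobject"}

# Illustrative markers for expressions that tend to return keys or connection strings.
SUSPECT_MARKERS = ("listkeys(", "instrumentationkey", "connectionstring")


def risky_outputs(template: dict) -> List[str]:
    """Return names of outputs that look secret but are not of a secure type."""
    flagged = []
    for name, output in template.get("outputs", {}).items():
        output_type = str(output.get("type", "")).lower()
        # Serialise the value so nested objects are searched as well.
        value = json.dumps(output.get("value", "")).lower()
        if output_type not in SECURE_TYPES and any(m in value for m in SUSPECT_MARKERS):
            flagged.append(name)
    return sorted(flagged)


# Hypothetical template fragment with three outputs.
template = {
    "outputs": {
        "appServicePlanId": {
            "type": "string",
            "value": "[resourceId('Microsoft.Web/serverfarms', 'demo-plan')]",
        },
        "appInsightsKey": {
            "type": "string",
            "value": "[reference('demo-insights').InstrumentationKey]",
        },
        "storageKey": {
            "type": "securestring",
            "value": "[listKeys(resourceId('Microsoft.Storage/storageAccounts', 'demostorage'), '2019-06-01').keys[0].value]",
        },
    }
}

print(risky_outputs(template))
# prints ['appInsightsKey']
```

A heuristic like this will miss secrets that are assembled in less obvious ways, so it complements a review of template outputs rather than replacing it.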

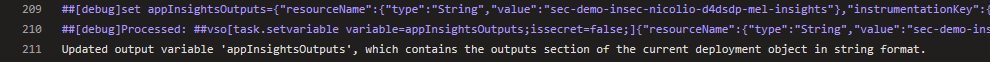

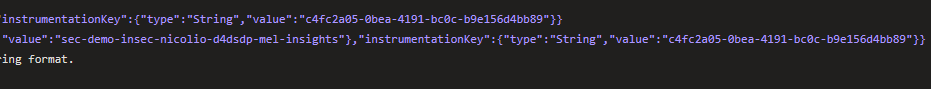

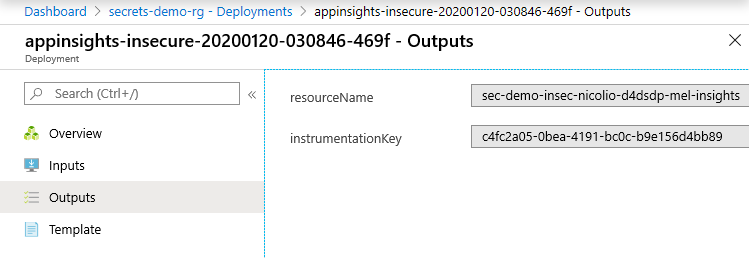

The following snippet shows outputting a resource secret as an ARM output (in this case the Application Insights instrumentation key) – AVOID DOING THIS!

Which results in the secret being exposed in the CI/CD log (with system debug enabled):

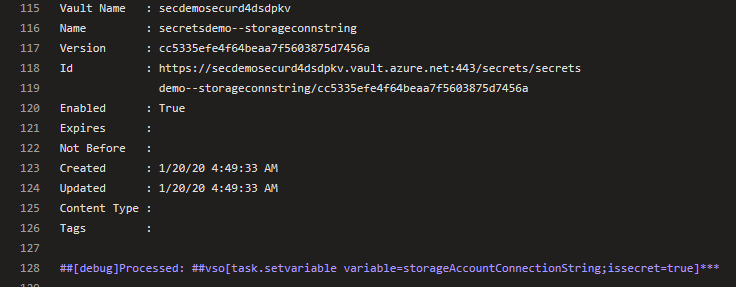

As well as in the deployment logs:

A more secure way to do this is to add the secret to the Key Vault during deployment and then include it as a Key Vault reference in the application, as demonstrated by the following snippet:

Exposing secrets in source files

Secrets that are hard coded into source files end up being available to anyone with read access to the source code repository (which could potentially be a large number of users!). This is probably the most obvious “no-no” for developers, so when it does occur it is usually unintentional. A common mistake is for developers to accidentally check in the local settings files used for development (such as local.settings.json), which contain various secrets.

Once source files containing secrets are checked in, they can go undetected and can be a pain to remove retrospectively, so the best control here would be to establish a good process that detects secrets in source code in addition to minimising the chance they will end up there in the first place.

Some initial controls to establish such a process include:

- Create a standardised “.gitignore” template that masks out local settings files

- Use a static source scanner to detect secrets in code

- Provide developers with security awareness training to ensure that not hard coding secrets is front of mind
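To give a feel for what a static source scanner does, here is a minimal Python sketch that searches text for a few secret-like patterns. It is not a substitute for a dedicated scanning tool. The patterns are illustrative assumptions chosen to match the kinds of secrets listed earlier, and the sample content imitates a local settings file with dummy values.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; real scanners ship far larger rule sets.
PATTERNS = {
    "storage account key": re.compile(r"AccountKey=[A-Za-z0-9+/=]{20,}"),
    "private key block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "client secret assignment": re.compile(r'(?i)client_?secret"?\s*[:=]\s*"[^"]{8,}"'),
}


def scan_text(text: str) -> List[Tuple[int, str]]:
    """Return (line number, pattern label) pairs for every suspicious line."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


# Dummy content resembling a settings file that should never be checked in.
sample = '''{
  "Values": {
    "AzureWebJobsStorage": "DefaultEndpointsProtocol=https;AccountName=demo;AccountKey=ZHVtbXlrZXlkdW1teWtleWR1bW15a2V5ZHVtbXk=;EndpointSuffix=core.windows.net",
    "FUNCTIONS_WORKER_RUNTIME": "dotnet"
  }
}'''

print(scan_text(sample))
# prints [(3, 'storage account key')]
```

Running a check of this kind as a pre-commit hook or pipeline step gives early warning, while the “.gitignore” template reduces the chance of the file being staged in the first place.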

Conclusion

Security in application development is undoubtedly complex and can be notoriously difficult to manage holistically. The conversations can seemingly go on forever with progress remaining questionable. That’s why I like to take the approach of breaking it down into defined actionable controls and standards that aim to reduce the risk of an incident by minimising vulnerabilities and decreasing the likelihood of a threat actor being successful. This blog outlines some baseline controls necessary to minimise authentication and authorisation vulnerabilities by keeping secrets secret within Azure. There are indeed many other security considerations necessary to achieve a well secured environment. However, I hope that what I have outlined in this blog can assist with your overall journey to higher levels of security maturity.

[1] Azure Managed Identities is a feature of Azure Active Directory that automatically manages an application’s identity for connections to other Azure services, removing the need to handle secrets for authentication and authorisation directly in your code. Microsoft documents this feature at https://docs.microsoft.com/en-us/azure/active-directory/managed-identities-azure-resources/.